Migrating RDMs to VSAN (Part 2)

- khushnood khan

- Jul 24, 2021

Updated: Jul 26, 2021

2) Migrating the quorum disk to a File Share Witness

The quorum disk is the shared disk used by the failover cluster.

Sharing can be either virtual or physical. With Windows Server 2008, a quorum disk is not mandatory. Instead we can use a file share witness (FSW). The FSW can be hosted on a standard Windows server on a vSAN datastore.

We have 2 VMs participating in failover clustering.

We can see the clustered disk that is acting as the quorum disk (Cluster Disk 3).

Now the cluster quorum disk will be converted to an FSW quorum.

1) Right-click the cluster and click More Actions > Configure Cluster Quorum Settings.

2) Click Next on the Before You Begin page.

3) Select the option "Select the quorum witness".

4) Click "Configure a file share witness" and click Next.

5) I have an Openfiler file share already configured.

Enter the path of the folder that is already shared and click Next.

6) Click Next.

7) Click Finish.

8) We can now see the file share witness listed as the quorum witness.
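
The wizard steps above can also be scripted. The following is a minimal sketch, not the method used in this walkthrough: it assumes the FailoverClusters PowerShell module is available on a cluster node, and the share path `\\fileserver\witness` is a hypothetical placeholder. The Python helper only builds the command line; it does not run anything against the cluster.

```python
def quorum_command(share_path: str) -> str:
    """Build the PowerShell command that points the cluster quorum at a file share."""
    # A file share witness must be addressed by a UNC path (\\server\share).
    if not share_path.startswith("\\\\"):
        raise ValueError("expected a UNC path such as \\\\server\\share")
    # Set-ClusterQuorum -NodeAndFileShareMajority switches the quorum model
    # to node majority plus a file share witness.
    return f'Set-ClusterQuorum -NodeAndFileShareMajority "{share_path}"'


if __name__ == "__main__":
    # Hypothetical share path, for illustration only.
    print(quorum_command(r"\\fileserver\witness"))
```

The printed command would be run in an elevated PowerShell session on one of the cluster nodes. Check the Set-ClusterQuorum documentation for your Windows Server release before using it, since the available switches differ between versions.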

3) Migrating VMs with shared RDMs to vSAN

We have two disks shared here as RDMs for the file server.

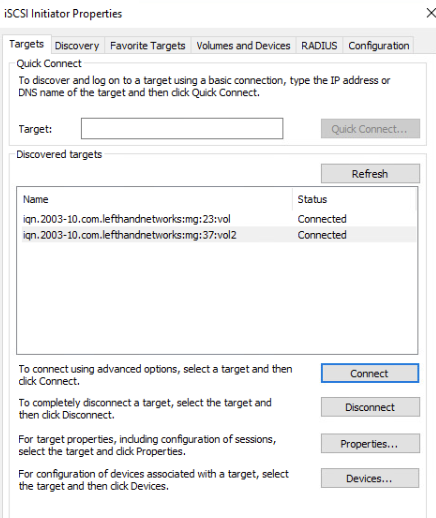

Here we will connect to the iSCSI SAN through the in-guest iSCSI initiator.

For that to happen, the iSCSI network needs to be ready. In our case we will use the production network as the iSCSI SAN network as well. In production, please add a separate network adapter to the VM and use a different network.

1) On the Windows machine, open the Start menu, click iSCSI Initiator, and click Yes on the popup.

2) Add the IP address of the storage in Quick Connect.

3) We can see all the LUNs discovered from the storage.

4) For multipathing, please check with your storage vendor.

5) We will now migrate the RDMs to the in-guest initiator.

NOTE: It is advisable to schedule a maintenance window for this process.

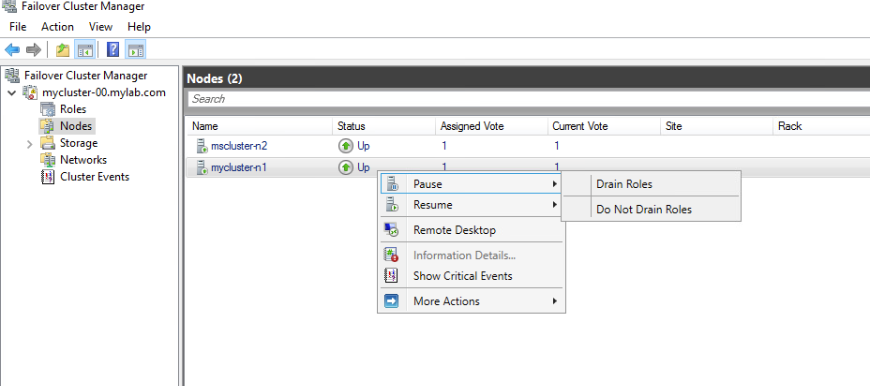

6) Pause node 2 for maintenance and choose the Drain Roles option.

7) Power off the secondary VM.

Then right-click the VM and click Edit Settings.

8) Remove the RDM disks from the VM and make sure not to check the "Delete files from datastore" option.

Also remove the SCSI controller that was sharing the RDMs.

9) Power on the VM, launch the iSCSI initiator, and connect all the targets.

10) Click Volumes and Devices and click Auto Configure.

This will mount the devices.

11) In Disk Management we can see the disks listed, but in a reserved and offline state.

This is expected, as this server does not currently own the role.

Resume the second node with the "Do Not Fail Roles Back" option.

12) Click Roles and move the role to the secondary node.

13) We can see the disks come back online with their drive letters assigned again.

14) Now pause node 1.

15) Shut down node 1 and delete the RDM disks and the SCSI controller, as was done for node 2.

16) Power on the VM and connect the targets as before.

17) Under Volumes and Devices, click Auto Configure to mount the disks.

18) We can see the disks there in a reserved state.

19) Now we will migrate the VM to vSAN, as done previously, from the 6.7 vCenter to the 7.0 vCenter.

Note that with this vSAN migration only the VM object has been moved to the vSAN datastore.

The data still resides on the iSCSI SAN storage.
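
The per-node cutover described in this section (pause and drain, swap the RDMs for in-guest iSCSI, resume without failback) can be summarized as a command checklist. This is a rough sketch under assumptions rather than the procedure used above: it assumes Windows Server 2012 or later, where the FailoverClusters and iSCSI PowerShell modules are available, and the node name and portal IP are hypothetical. The Python function only returns the commands in order; because the sequence spans a power cycle and a manual vSphere step, it is meant as a checklist and not as a single script.

```python
def node_cutover_commands(node: str, portal_ip: str) -> list[str]:
    """Return, in order, the PowerShell commands for moving one cluster node off its RDMs."""
    return [
        # Pause the node and drain its roles to the other node.
        f"Suspend-ClusterNode -Name {node} -Drain",
        # Manual vSphere step, kept as a comment line so the order stays visible.
        "# (vSphere) power off, remove the RDM disks and the shared SCSI controller, power on",
        # Register the storage portal with the in-guest iSCSI initiator.
        f"New-IscsiTargetPortal -TargetPortalAddress {portal_ip}",
        # Log in to every discovered target and keep the sessions across reboots.
        "Get-IscsiTarget | Connect-IscsiTarget -IsPersistent $true",
        # Resume the node without moving roles back automatically.
        f"Resume-ClusterNode -Name {node} -Failback NoFailback",
    ]


if __name__ == "__main__":
    # Hypothetical node name and portal address, for illustration only.
    for line in node_cutover_commands("node2", "192.168.1.50"):
        print(line)
```

The Auto Configure step under Volumes and Devices is not covered by this sketch and would still be done in the iSCSI Initiator dialog. The same list applies to node 1 once the roles are running on node 2.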
